Recently, two entities asked us to help them host their DNS zones, and in both cases we were happy to oblige. One of them was the Czech neutral peering node NIX.CZ, with which we often share technical know-how and help each other when it makes sense. The other was the domain registry of Guatemala, which operates the .gt ccTLD. We agreed to help as part of our long-term support of developing registries, as we have already done for the registries of Angola, Malawi, Tanzania and North Macedonia.

In the case of NIX.CZ, approximately twenty second-level domains (SLDs) were involved. The motivation was to consolidate the existing infrastructure in another data center, where they operate a slave DNS instance in another autonomous system.

The Guatemalan registry wanted to extend the availability of its TLD and SLD zones to additional DNS nodes around the world. The registry manages eight zones, with a total of approximately 20,000 domain names.

In this post, I will try to describe the technical aspects of such cooperation.

DNS infrastructure

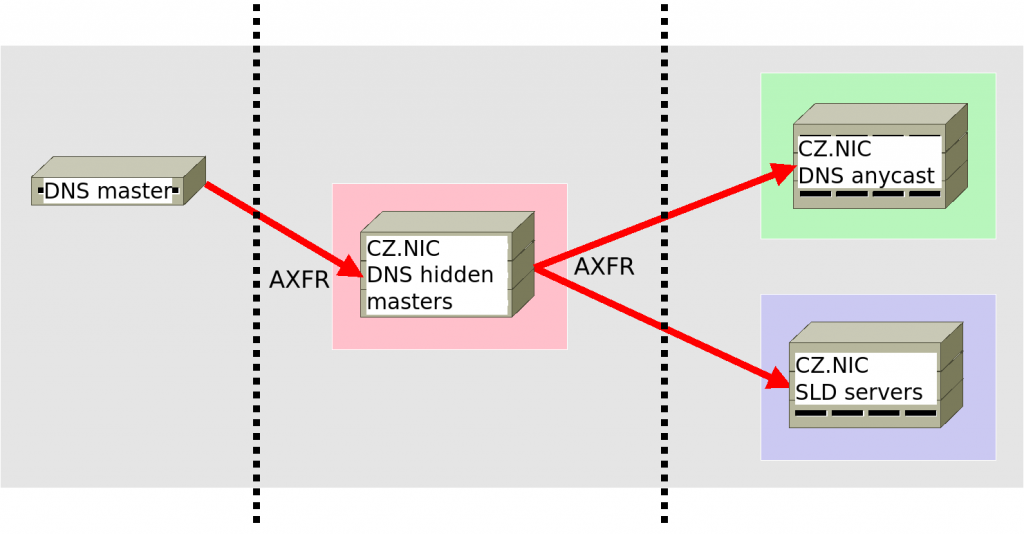

First of all, it is necessary to determine at which DNS slave instances the required domains should be available. We distinguish two environments: DNS anycast and SLD servers, which differ in the number of servers (computing power), geographical location and required availability.

Anycast DNS is our main DNS environment, where we operate the .CZ ccTLD and the most important second-level domains (e.g. nic.cz, mojeid.cz). It is available on four independent IP ranges, denoted by the letters A to D. Within the anycast, individual servers in various locations around the world announce an IPv4 /24 prefix and an IPv6 /48 prefix using BGP, thereby routing DNS traffic to themselves. The anycast DNS comprises more than 100 servers, on which we run various DNS daemons (KNOT, BIND, NSD) and routing daemons (BIRD, QUAGGA, FRROUTING) to maintain diversity. The ccTLD zones that we host are served from reserved IP addresses in the D range of the anycast.

The SLD servers, in turn, serve the other second-level domains. They are standalone DNS servers without routing daemons. Each of our two Prague data centers houses one such server, and one more is located outside our network, within another autonomous system. Unlike the anycast DNS, these servers are not operated in foreign locations, but we maintain the diversity of DNS daemons here as well.

So why do we have two environments when anycast DNS alone would be enough? The reason is the relatively time-consuming process of adding or removing even a single DNS zone on the anycast DNS, and the risk of an error which, given the critical importance of this system, could have a fatal impact on the operation of the .CZ domain. Managing domains on the SLD servers, on the other hand, takes our administrators less time, and the potential negative impact of a configuration error is also smaller. Managing zones on the anycast DNS infrastructure means adjusting the configuration on 100+ servers one by one, always carefully confirming that the change was successful. Although it is DNS anycast, no administrator would dare to configure and reload DNS zones on all servers at once.
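To illustrate the one-by-one approach described above, here is a minimal Python sketch of a rolling update that stops at the first failed check. It is not our actual tooling: the function name `rolling_update`, the node names and both callbacks are hypothetical placeholders for whatever applies and verifies a change on a real server.

```python
from typing import Callable, Iterable, List


def rolling_update(
    servers: Iterable[str],
    apply_change: Callable[[str], None],
    verify: Callable[[str], bool],
) -> List[str]:
    """Apply a change to one server at a time and stop at the first failure.

    Returns the servers that were updated and verified successfully.
    """
    done: List[str] = []
    for server in servers:
        apply_change(server)
        if not verify(server):
            # Halt immediately so a bad change never reaches the remaining nodes.
            raise RuntimeError(
                f"verification failed on {server}; "
                f"{len(done)} server(s) updated before the stop"
            )
        done.append(server)
    return done


if __name__ == "__main__":
    # Hypothetical node names; the callbacks only simulate the real work.
    nodes = ["d-node-01", "d-node-02", "d-node-03"]
    updated = rolling_update(
        nodes,
        apply_change=lambda s: print(f"reconfiguring {s}"),
        verify=lambda s: True,
    )
    print(f"updated {len(updated)} of {len(nodes)} servers")
```

The point of the design is that a failed verification leaves every later server untouched, which is exactly why the process is slow but safe.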

DNS server configuration changes

We have now established which DNS environment will be used for each zone. We will operate the .gt ccTLD zones on the whole D-anycast, as we do for the .mk, .tz, .ao and .mw ccTLDs, while the second-level NIX.CZ domains will be available only on the SLD servers.

What steps are needed to get zones to DNS servers?

First, we have to configure our hidden master (HM) servers. These servers run “hidden” within our network, keep the zone files up to date and distribute them to all anycast DNS servers or SLD servers.

The steps are as follows:

- We communicate the IP prefixes of our HM servers, which the entity whitelists in its firewall so that we can update the zones.

- We obtain the IP addresses of the DNS master from which we will download the zone contents via AXFR to our HM servers.

- We agree on a TSIG key to keep the zone transfer secure.

- We try to obtain the zone, for example with the dig utility:

hm> dig @DNS_MASTER axfr TLD -y TSIG_ALG:TSIG_KEYNAME:TSIG_SECRET

- We prepare configuration files for individual domains.
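The key agreement and the test transfer from the steps above can be sketched in a few lines of Python. This is only an illustration under assumptions: the helper names, the key name `hm-key`, the `hmac-sha256` algorithm and the address `192.0.2.53` (taken from the documentation range) are made up, and in practice the secret has to be exchanged with the other party over a secure channel.

```python
import base64
import secrets
import shlex
from typing import List


def generate_tsig_secret(num_bytes: int = 32) -> str:
    """Return a random Base64-encoded secret (32 bytes suits hmac-sha256)."""
    return base64.b64encode(secrets.token_bytes(num_bytes)).decode("ascii")


def build_axfr_command(
    master: str, zone: str, algorithm: str, key_name: str, secret: str
) -> List[str]:
    """Build the dig argument list for a TSIG-signed AXFR of one zone."""
    return [
        "dig",
        f"@{master}",
        "axfr",
        zone,
        "-y",
        f"{algorithm}:{key_name}:{secret}",
    ]


if __name__ == "__main__":
    # All values below are hypothetical placeholders.
    secret = generate_tsig_secret()
    cmd = build_axfr_command("192.0.2.53", "gt", "hmac-sha256", "hm-key", secret)
    # Print the command instead of running it; the master is not reachable here.
    print(shlex.join(cmd))
```

Because `-y` places the secret on the command line, where other local users may see it in the process list, dig's `-k` option with a key file is an alternative worth considering.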

Once all the required zones are present on our HM servers, we can prepare the configuration for the destination DNS servers that will serve these zones to users on the Internet. Because we run different DNS daemons, we have prepared individual Ansible roles which, depending on the daemon, generate the final configuration for each zone so that the DNS servers receive zone updates directly from our HM servers. Then we just need to run the Ansible playbooks on the required servers.
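To give an idea of what "a final configuration per daemon" means, the following Python sketch renders a minimal slave-zone stanza for each of the three daemons. It is a stand-in for illustration, not our Ansible roles: the address `192.0.2.10`, the key name `hm-key`, the Knot identifiers `hm` and `notify_from_hm`, the file path and all function names are assumptions, and a real configuration needs further sections (keys, remotes, ACLs) defined elsewhere.

```python
from typing import Callable, Dict

# Hypothetical values: a hidden-master address from the documentation
# range and a TSIG key name shared with the destination servers.
HM_ADDRESS = "192.0.2.10"
TSIG_KEY = "hm-key"


def render_knot(zone: str) -> str:
    # Assumes a remote "hm" and an ACL "notify_from_hm" exist in knot.conf.
    return (
        "zone:\n"
        f"  - domain: {zone}\n"
        "    master: hm\n"
        "    acl: notify_from_hm\n"
    )


def render_bind(zone: str) -> str:
    # Uses the classic "slave"/"masters" keywords; newer BIND releases
    # also accept "secondary"/"primaries".
    return (
        f'zone "{zone}" {{\n'
        "    type slave;\n"
        f'    file "slaves/{zone}.zone";\n'
        f'    masters {{ {HM_ADDRESS} key "{TSIG_KEY}"; }};\n'
        "};\n"
    )


def render_nsd(zone: str) -> str:
    # Assumes the TSIG key is declared in a separate "key:" section.
    return (
        "zone:\n"
        f'    name: "{zone}"\n'
        f"    request-xfr: AXFR {HM_ADDRESS} {TSIG_KEY}\n"
        f"    allow-notify: {HM_ADDRESS} {TSIG_KEY}\n"
    )


RENDERERS: Dict[str, Callable[[str], str]] = {
    "knot": render_knot,
    "bind": render_bind,
    "nsd": render_nsd,
}


def render_zone(daemon: str, zone: str) -> str:
    """Return the zone stanza for the given daemon, or fail loudly."""
    try:
        return RENDERERS[daemon](zone)
    except KeyError:
        raise ValueError(f"unsupported DNS daemon: {daemon}") from None


if __name__ == "__main__":
    for daemon in RENDERERS:
        print(f"# {daemon}")
        print(render_zone(daemon, "gt"))
```

Keeping one renderer per daemon mirrors the idea of one role per daemon: the zone list stays the same, and only the output syntax differs.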

The following figure shows a simplified zone update workflow.